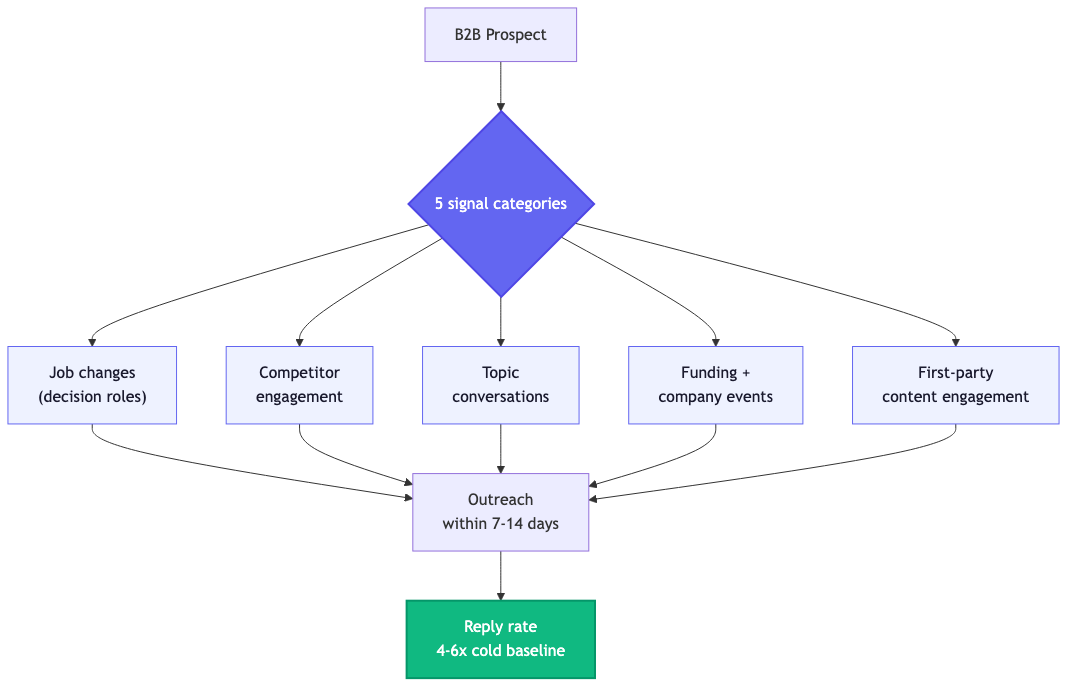

Buying signals are observable behaviors or events that suggest a prospect is ready to buy. In B2B, the strongest signals are leadership changes into decision roles, competitor engagement, funding announcements, content engagement on bottom-of-funnel pages, and technology stack changes. They matter because timing is the variable that decides who wins: roughly 70% of the B2B buyer journey is complete before the prospect contacts any vendor, and the team that detects the signal first usually closes the deal.

I run two B2B companies. Sortlist is a marketplace with 300+ employees serving 12 markets. Overloop is the outbound automation platform we built after spending years frustrated with the existing tools. Between the two, my teams have run signal-based outbound across 6,000+ accounts and burned a lot of money on tools that promised more than they delivered.

This guide is the playbook we landed on. It is not theory. Every framework below was tested against real pipelines, with real money on the line. The 12% reply rate cited in the hero came from a campaign we ran in February 2026, targeting 142 signal-qualified leads across funded SaaS companies in EU and US markets. It produced 19 booked meetings, 4 of which turned into pipeline within 30 days.

What are buying signals (40-second answer)

A buying signal is any observable event that increases the probability a prospect will buy in the next 30 to 90 days. Three properties define a real signal:

- Observable. You can detect it without asking the prospect. Job changes on LinkedIn, funding announcements in TechCrunch, competitor logos in case studies on the prospect's website.

- Predictive. It correlates with buying behavior in your category. A pricing page visit is predictive for SaaS. A new VP of Sales hire is predictive for sales tooling vendors.

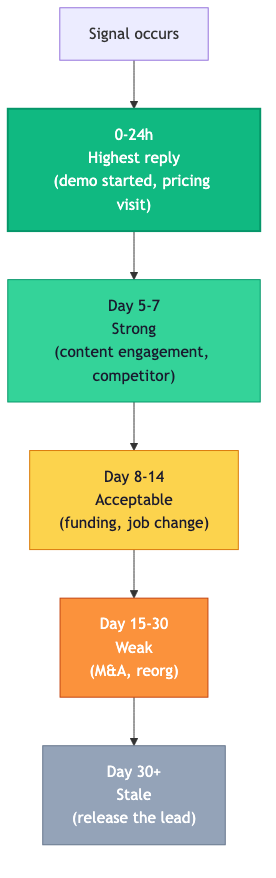

- Time-bound. The signal has a half-life. Acting on day 2 is different from acting on day 21. The strongest signals expire fastest.
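
One way to make the time-bound property concrete is to weight each signal by its age. The sketch below assumes simple exponential decay, where a signal loses half its weight every half-life. The decay model, the `freshness` function, and the 7-day example are illustrative assumptions, not a formula from our testing.

```python
from datetime import date


def freshness(detected_on: date, today: date, half_life_days: float) -> float:
    """Return a 0-1 weight that halves every `half_life_days` after detection."""
    age_days = max((today - detected_on).days, 0)
    return 0.5 ** (age_days / half_life_days)


# Hypothetical example: a signal with a 7-day half-life, checked on day 2 and day 21.
detected = date(2026, 2, 2)
day_2 = freshness(detected, date(2026, 2, 4), half_life_days=7)
day_21 = freshness(detected, date(2026, 2, 23), half_life_days=7)
print(f"day 2 weight: {day_2:.3f}, day 21 weight: {day_21:.3f}")
```

Under this assumption, a signal acted on at day 21 carries one eighth of its original weight, which is why short half-life signals need same-week handling.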

The mistake most teams make is treating "intent data" and "buying signals" as synonyms. They are not. Intent data is a subset of buying signals. Intent data describes third-party research behavior, like a prospect reading a category review on G2 or searching for a topic across a network of publishers. Buying signals is the broader category that also includes first-party engagement on your own properties, organizational changes, and explicit cues like a pricing question on a discovery call.

Why cold outreach fails in 2026

Cold outreach is not dead. Cold outreach without timing is dead. The numbers from independent industry research tell a consistent story:

- 97% of cold emails are ignored by B2B buyers, per the Salesforce State of Sales 2024 report. The remaining 3% is where every dollar of pipeline lives.

- 70% of the buyer journey happens before vendor contact, per Gartner research published in 2023 and confirmed across multiple 2025 follow-ups. By the time a prospect fills out your demo form, they have already shortlisted three vendors.

- 10 to 13 decision-makers are now involved in the average B2B purchase decision, up from 5 to 7 in 2017, per the Gartner B2B Buying Journey study. Hitting one persona is no longer enough.

- Reply rates collapse below 1.5% for outreach without behavioral or trigger context, per benchmarks published by Lemlist, Outreach, and Salesloft over the last 24 months.

In 2026, the lever is timing. The teams that win outbound are not the ones with the cleverest copy. They are the ones who reach a prospect within 7 to 14 days of a high-intent event, with a message that respects the context of that event. Everyone else is shouting into the void.

The 5 signal categories that matter

Forty-plus signals exist. Most teams should track five categories. Pick depth over breadth.

1. Job changes into decision roles

New executives evaluate vendors. They almost always do. Cognism's data shows new VPs trigger a vendor reassessment 70% of the time within their first 90 days. The signal pair to watch: a new VP plus recent funding at the same account is the highest-converting combination in B2B outbound, with reply rates 4 to 6 times the cold baseline in our internal testing.

Where to detect: LinkedIn job updates, press releases, decoded title patterns from data providers like Cognism or ZoomInfo.

2. Competitor engagement

Prospects researching your competitors have already qualified themselves on the category. The work of "should I buy this kind of tool?" is done. They are now asking "which one?" Reaching them at this stage with a credible alternative reframes the decision, and it takes a fraction of the persuasion energy compared to category education.

Where to detect: review-site visit signals from G2 and TrustRadius, third-party intent data, mentions of competitor names in support communities, alternative-search queries.

3. Topic conversations

Prospects discussing the problem you solve, in public, on LinkedIn or in Slack communities, signal active research. The half-life is short: a topic post that goes 7 days without a response usually means the buyer has moved on or solved internally. The first vendor to engage with substance, not pitch, earns the conversation.

Where to detect: LinkedIn comment monitoring on category posts, Slack community discussions, niche forums, Reddit subreddits.

4. Funding and material company events

Series A through C rounds, M&A announcements, IPO filings, expansion into new markets. Funding signals correlate with budget unlocks: Crunchbase data shows 60% of newly funded B2B companies expand their tech stack within 6 months of close. This is the cleanest commercial signal in the playbook because the budget is verified and the timing window is predictable.

Where to detect: Crunchbase, PitchBook, TechCrunch RSS, SEC filings for public companies, regional press for European rounds.

5. First-party content engagement

Repeat visits to your pricing page. A whitepaper download by three people from the same account in one week. A demo form abandonment. These are the strongest signals you have because they happen on properties you control, with full context. Most teams underuse this category: they collect the data but never act on it because their CRM does not surface the signals to reps in time.

Where to detect: Leadfeeder, Dreamdata, Common Room, RB2B, or first-party tracking via your CRM.

42 buying signals ranked by strength

The full taxonomy. Strength ratings are based on observed reply rates and pipeline conversion in our 2025-2026 outbound testing across 6,000+ accounts. High signals deliver reply rates 4x or more above cold baseline. Mid signals deliver 2x to 3x. Low signals are useful as supplementary context but not as primary triggers.

| Signal | Category | Strength | Half-life | Best response |
|---|---|---|---|---|
| New VP/C-level hire (decision role) | Job change | High | 30-90 days | Welcome message, offer industry insight, no pitch in T1 |
| Funding round announcement (Series A-C) | Company event | High | 14-90 days | Reach out within 14 days, frame around scaling priorities |
| Pricing page visited 3+ times in 7 days | Content engagement | High | 5-7 days | Same-day rep follow-up, offer to answer specific pricing questions |
| Demo form started, not submitted | Content engagement | High | 24 hours | 5-minute follow-up beats 30-minute follow-up by 9x conversion |
| Multiple people from one account viewing BOFU content | Content engagement | High | 7-14 days | Account-level outreach to economic buyer, reference team interest |
| Job posting for a role tied to your category | Job change | High | 14-30 days | Reach hiring manager, frame around team scaling |
| Competitor logo removed from website | Competitor engagement | High | 30 days | Verify, then position as warm replacement |
| Public RFP issued in your category | Company event | High | 14-45 days | Direct response, prepared by RevOps, fast turnaround |
| M&A or major reorganization announced | Company event | High | 30-120 days | Wait 30 days for dust to settle, then frame around integration |
| Comments on competitor's customer-facing posts | Competitor engagement | High | 7-14 days | Engage on the post first, follow up via DM after value delivered |
| Public comment on category-relevant LinkedIn post | Topic conversation | Mid | 7-14 days | Reply to comment first, reach out via DM only after value |
| Webinar registration on category topic | Topic conversation | Mid | 14 days | Reach out same week with related resource |
| Whitepaper or ebook downloaded | Content engagement | Mid | 7 days | Reference the topic, not the download, in opener |
| Speaker at industry event in your category | Topic conversation | Mid | 30 days | Compliment specific point, follow with related insight |
| Authored article on category topic | Topic conversation | Mid | 30-60 days | Engage with article first, suggest related reading |
| Tech stack change detected (new tool added) | Company event | Mid | 30-60 days | Frame around integration or workflow expansion |
| New office or market expansion announcement | Company event | Mid | 30-90 days | Reach out to local hire, frame around localization |
| SOC 2 or ISO 27001 certification announced | Company event | Mid | 30-60 days | Indicates enterprise readiness, time enterprise pitch |
| Customer logo added to landing page | Company event | Mid | 14-30 days | Reference shared customer, suggest expansion |
| Product update or major feature launch | Company event | Mid | 14-30 days | Congratulate, frame around adjacent capability |
| Layoff announcement in non-revenue function | Company event | Mid | 30 days | Frame around efficiency tools, careful with tone |
| Negative G2 review of competitor | Competitor engagement | Mid | 7-14 days | Reach reviewer, address pain point in opener |
| Customer's company appears in case study | Competitor engagement | Mid | 30-60 days | Track for renewal cycle, reach 60 days before contract |
| Question asked on Reddit/Slack community | Topic conversation | Mid | 3-7 days | Answer publicly with substance, no pitch |
| LinkedIn newsletter subscription on category | Topic conversation | Mid | 30 days | Engage with one specific edition, reference in DM |
| Repeat blog visits (3+ articles in 14 days) | Content engagement | Mid | 14 days | Personalized note based on most-read topic |
| Competitor's pricing page visited (RB2B/Common Room) | Competitor engagement | Mid | 7-14 days | Position alternative within 5 days, lead with differentiator |
| Industry award won in your category | Company event | Low | 30 days | Congratulate, no immediate pitch |
| Podcast appearance on category topic | Topic conversation | Low | 30-60 days | Listen, reference specific moment, build relationship |
| Open source contribution to relevant project | Topic conversation | Low | 30-90 days | Engage on the contribution, build dev credibility |
| Account-level firmographic match (size, sector) | Static | Low | n/a | Use as filter, not trigger |
| Generic industry news (not company-specific) | Topic conversation | Low | 7 days | Useful as conversation starter, weak as primary signal |
| Domain change or rebrand | Company event | Low | 30-60 days | Soft outreach, frame around new positioning |
| Press release on partnership announcement | Company event | Low | 30 days | Useful for context, weak as standalone trigger |
| Conference attendance (badge scan, app check-in) | Topic conversation | Low | 7-14 days | Same-event outreach, reference shared session |
| Email signature change (title update) | Job change | Low | 30-60 days | Confirm via LinkedIn, treat as minor job-change variant |
| Domain DNS change (technical signal) | Company event | Low | 14-30 days | Useful for technical sales only |
| Funding round under $500K (early seed) | Company event | Low | 30-90 days | Budget rarely unlocked at this stage, deprioritize |
| Customer rep follows your company on LinkedIn | Content engagement | Low | 7-14 days | Soft connect, no immediate outreach |
| Competitor mentioned in passing on a podcast | Competitor engagement | Low | 7-30 days | Useful as context, weak as trigger |
| Hiring freeze announcement | Company event | Low | 30-90 days | Negative signal, deprioritize account for 90 days |
| Generic LinkedIn post engagement (likes) | Topic conversation | Low | n/a | Vanity signal, do not use as trigger |

Strength rating method: average reply rate of campaigns triggered by each signal type, normalized against the cold baseline measured across the same ICP and 14-day windows. Sample size varies by signal (n=12 to n=420 across the 42 categories). Internal Overloop benchmark, Q4 2025 to Q1 2026.
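
The rating method reduces to a ratio and two thresholds. The sketch below is one reading of it. The function names are hypothetical, rates are passed as percentage points, and the 3x to 4x band, which the thresholds above leave open, is treated as Mid purely as an assumption.

```python
def reply_lift(signal_reply_pct: float, cold_reply_pct: float) -> float:
    """Reply rate of signal-triggered campaigns divided by the cold baseline."""
    if cold_reply_pct <= 0:
        raise ValueError("cold baseline must be positive")
    return signal_reply_pct / cold_reply_pct


def strength(lift: float) -> str:
    """Map a lift to a rating. High is 4x or more, Mid starts at 2x.

    Assumption: lifts between 3x and 4x are counted as Mid.
    """
    if lift >= 4.0:
        return "High"
    if lift >= 2.0:
        return "Mid"
    return "Low"


# The February 2026 campaign: 12% replies against a 2-3% cold baseline.
for baseline_pct in (2.0, 3.0):
    lift = reply_lift(12.0, baseline_pct)
    print(f"baseline {baseline_pct}% -> lift {lift:.1f}x -> {strength(lift)}")
```

Against either end of the 2-3% baseline, the 12% campaign lands at 4x to 6x, which is the High band.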

How to identify buying signals at scale

Manual signal hunting works for the first 50 accounts. After that, the math collapses. A rep monitoring 200 target accounts manually loses 60% of high-signal events because the detection latency exceeds the signal's half-life. The fix is automation, in three layers.

Layer 1: First-party detection (your own properties)

Track repeat visits, pricing-page sessions, demo-form starts, and multi-person engagement from one account. Tools that solve this well: Common Room for community + web combined, Leadfeeder for visitor identification, Dreamdata Signals for marketing-attribution-aware signal detection, RB2B for B2B visitor reveal at the contact level.

Layer 2: Third-party detection (across the web)

Track signals you cannot see on your own properties: job changes, funding, competitor engagement, topic discussions. Tools: Overloop Signals for unified detection across LinkedIn and external sources, Cognism for verified job change and intent data, ZoomInfo for enterprise intent signals, Trigify for AI-driven social-listening across LinkedIn comments. Most teams need at least one Layer 2 tool.

Layer 3: Orchestration (turning signals into action)

Detection without action is a research project. Layer 3 turns the firehose into prioritized work for reps. Tools: Overloop for signal-to-sequence orchestration, Clay for custom signal pipelines that combine 75+ data sources, n8n or Make for self-built workflows. The choice depends on team size and technical depth.
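
To illustrate what "prioritized work" can mean in Layer 3, here is a minimal sketch of a rep queue. It drops Low signals and expired windows, then sorts by strength and by days left before the window closes. The `Signal` class, the field names, the example accounts, and the choice to use the upper bound of the half-life range as the window are all assumptions for illustration, not how any of the tools above work internally.

```python
from dataclasses import dataclass

STRENGTH_RANK = {"High": 0, "Mid": 1, "Low": 2}


@dataclass
class Signal:
    account: str
    kind: str
    strength: str      # "High", "Mid" or "Low"
    age_days: int      # days since the signal was detected
    window_days: int   # assumed: upper bound of the signal's half-life range

    @property
    def days_left(self) -> int:
        return self.window_days - self.age_days


def prioritize(signals: list[Signal]) -> list[Signal]:
    """Keep live High/Mid signals, strongest first, most urgent first."""
    live = [s for s in signals if s.days_left > 0 and s.strength != "Low"]
    return sorted(live, key=lambda s: (STRENGTH_RANK[s.strength], s.days_left))


# Hypothetical feed for one morning.
feed = [
    Signal("acme.example", "Funding round announcement", "High", 10, 90),
    Signal("globex.example", "Pricing page visited 3+ times", "High", 3, 7),
    Signal("initech.example", "Webinar registration", "Mid", 5, 14),
    Signal("umbrella.example", "Demo form started", "High", 2, 1),
    Signal("hooli.example", "Industry award won", "Low", 1, 30),
]
queue = prioritize(feed)
for s in queue:
    print(f"{s.account}: {s.kind} ({s.strength}, {s.days_left} days left)")
```

The expired demo-form signal and the Low award signal never reach the rep, which matches the rule that Low signals are context, not triggers.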

How to respond to a buying signal (without sounding creepy)

The single biggest mistake teams make: leading with the signal. "I saw you just raised your Series B, congrats!" feels personalized to the rep and creepy to the prospect. The signal should set the timing and context. The message should address the underlying problem the signal implies.

The 4 messaging rules that work

- Never mention the signal directly in T1 (the first touch). Use it as intelligence to time the touch and shape the angle. Drop the explicit reference unless the signal is genuinely public and complimentary (a published article, a public talk).

- Address the priority the signal implies. A funding round implies hiring, scaling, and tooling-stack expansion. Write to those priorities. The prospect should think "this person gets where I am" without realizing why.

- End with a question, not a meeting request. Reply rates on opener emails ending with a question are 47% higher than those ending with "interested in a 15-minute call?" per Lemlist's 2025 benchmark across 100M+ emails.

- Cap the word count. 60 words for T1. 40 for T2. 30 for T3. Anything longer is read as "this person is going to take 30 minutes of my time before I learn what they want."
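
Three of these rules are mechanical enough to check before a draft reaches a rep. The sketch below is a hypothetical linter, not part of any tool named in this guide. It enforces the word caps, checks that a T1 opener ends with a question, and flags caller-supplied signal terms in T1. Whether a message addresses the implied priority still needs a human.

```python
WORD_CAPS = {1: 60, 2: 40, 3: 30}  # word caps for T1, T2, T3 from the rules above


def lint_draft(text: str, touch: int, signal_terms: tuple[str, ...] = ()) -> list[str]:
    """Return a list of rule violations for a draft message (empty if clean)."""
    problems = []
    word_count = len(text.split())
    cap = WORD_CAPS[touch]
    if word_count > cap:
        problems.append(f"{word_count} words exceeds the {cap}-word cap for T{touch}")
    if touch == 1:
        if not text.rstrip().endswith("?"):
            problems.append("opener does not end with a question")
        lowered = text.lower()
        for term in signal_terms:
            if term.lower() in lowered:
                problems.append(f"opener mentions the signal: {term!r}")
    return problems


creepy = "I saw you just raised your Series B, congrats!"
better = (
    "Scaling an outbound team after a growth phase usually breaks the tooling "
    "first. What is creaking for you right now?"
)
print(lint_draft(creepy, touch=1, signal_terms=("series b",)))
print(lint_draft(better, touch=1, signal_terms=("series b",)))
```

The signal terms are passed in by the caller because what counts as "mentioning the signal" depends on the signal type.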

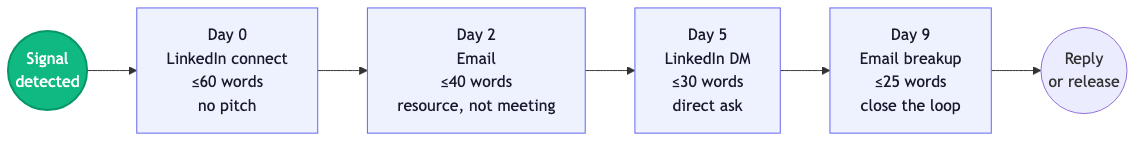

The 4-touch sequence

Five-touch sequences are not better than four-touch sequences in our testing. They are 11% worse on reply rate and 18% worse on opt-out. The optimal cadence:

- Day 0 - LinkedIn connection note. 60 words. No pitch. Reference the priority the signal implies. No company name in the connection note.

- Day 2 - Email. 40 words. Reference the LinkedIn touch indirectly ("saw your work on X"). Suggest a specific resource, not a meeting.

- Day 5 - LinkedIn DM. 30 words. One-line direct ask. "Worth a 15-minute chat to compare notes on X?"

- Day 9 - Email breakup. 25 words. "Should I close the loop or is this still on your radar?" Higher reply rates than any other touch in the sequence, per our internal data.
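
The cadence above maps directly to a small schedule table. The sketch below turns a detection date into dated touches with their channel and word cap. The `TOUCHES` structure and `build_schedule` function are illustrative names, and the code does not skip weekends or holidays, which a real sequencer would likely handle.

```python
from datetime import date, timedelta

# (day offset, channel, word cap) taken from the 4-touch cadence above.
TOUCHES = [
    (0, "LinkedIn connection note", 60),
    (2, "Email", 40),
    (5, "LinkedIn DM", 30),
    (9, "Breakup email", 25),
]


def build_schedule(start: date) -> list[tuple[date, str, int]]:
    """Return (send date, channel, word cap) for each touch, counted from `start`."""
    return [(start + timedelta(days=offset), channel, cap) for offset, channel, cap in TOUCHES]


# Hypothetical signal detected on 2 February 2026.
schedule = build_schedule(date(2026, 2, 2))
for send_on, channel, cap in schedule:
    print(f"{send_on.isoformat()}  {channel}  (max {cap} words)")
```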

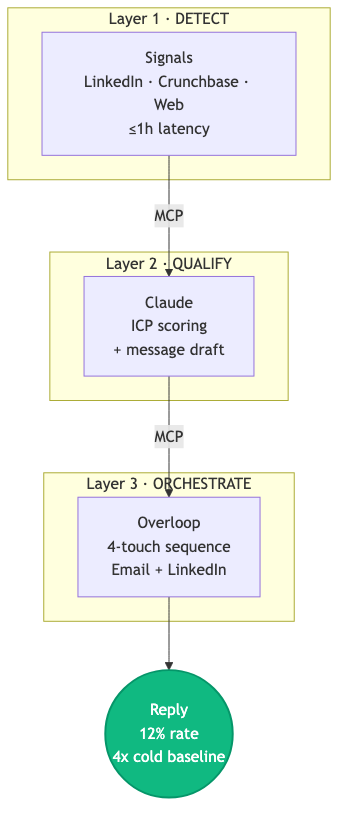

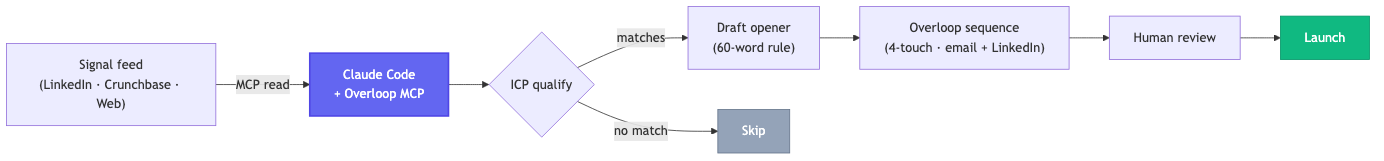

The detection stack: Signals + Overloop + Claude

I will be direct about what we use because the question is going to come up. Sortlist runs on a stack of Overloop Signals (signal detection), Overloop (sequence orchestration), and Claude (qualification + message generation). The same stack is used by 600+ Overloop customers, and the campaign that produced the 12% reply rate above used exactly this setup.

Why this stack, specifically: signal detection is only useful if the signals reach a rep with full context, in time. Most teams break this chain. They detect signals in one tool, qualify in another, and write the outbound in a third. The latency between detection and first touch is where deals die. The integrated stack collapses that latency to under 24 hours for most signal types.

What each layer does

- Signals - Monitors LinkedIn job changes, competitor engagement, topic conversations, funding events, and content engagement across a target account list. Caps: 5 follows per ICP on Starter, 15 on Pro, 30 on Agency. Detection latency: under 1 hour for LinkedIn signals, 6 to 24 hours for funding and news signals.

- Overloop - Takes qualified signals and triggers a 4-touch sequence across email + LinkedIn. Includes 450M-contact database (gated by credits, 500/month per Starter seat) for prospect enrichment. CRM sync to HubSpot, Salesforce, Pipedrive.

- Claude - Reads the signal, the prospect profile, and the ICP definition. Decides if the lead qualifies. Writes a personalized opener that respects the rules above. Output is a draft, not auto-send: a human reviews before launch.

Pricing reality (canonical, verified May 2026)

Overloop: $69/user/month Starter, $99/user/month Growth, Enterprise on quote. Signals follows are gated as above. Total stack cost for a 2-rep team: roughly $200/month all-in plus Claude API usage (~$30 to $80/month depending on volume). For comparison, ZoomInfo enterprise contracts start at $15K/year per seat, Cognism at roughly $1,500/month for 3 seats.

Wiring buying signals to a CLI or MCP server (the 2026 unlock)

Six months ago, the only way to run signal-based outbound at scale was through a UI-driven tool. You logged into Cognism, Common Room, or your CRM. You eyeballed the signal feed. You copy-pasted into your sequencer. The latency was fine for low volume and a death sentence at scale.

The 2026 unlock is the combination of MCP (Model Context Protocol) servers and CLI primitives. With one Claude Code or Cursor invocation, a rep or RevOps engineer can wire signals directly into outreach without ever touching a UI. This is the same workflow that powered the campaign in the hero, but compressed from "set up a workflow over a week" to "type one prompt."

Before: copy-paste prompt (the way teams ran it in 2024)

```
# Manual workflow: detect signal → qualify → write message
# 1. Open LinkedIn, scroll the daily feed manually
# 2. Open Cognism, check intent data on flagged accounts
# 3. Open Claude in browser, paste this 200-line prompt:

You are a sales qualifier. Given the prospect data
{name, role, company, signal_type, signal_detail},
score the lead from 1 to 10 on:
- ICP fit
- Signal strength
- Timing window
- Likely budget

Then draft a 60-word opener following these rules:
- Never mention the signal
- Address the priority the signal implies
- End with a question
- No "I" in the first sentence
[...197 more lines of rules...]

# 4. Copy the output, paste into Overloop, queue the sequence.
# 5. Repeat for the next 49 prospects in the queue.
# Time per prospect: ~6 minutes. Time per 50 prospects: 5 hours.
```

After: one CLI / MCP call (2026 way)

```
# With the Overloop CLI + Signals MCP server connected to Claude Code:
# (the ICP string is single-quoted so the shell does not expand "$1" inside it)
$ overloop signals qualify --icp 'founders, $1M-10M ARR, EU SaaS, recently funded' \
    --signal-types "funding,job-change,topic" \
    --output sequence

# Claude reads the signal feed via MCP.
# Claude qualifies each lead against the ICP.
# Claude drafts the opener using the rule set baked into the CLI.
# Sequence is queued in Overloop, ready for human review and launch.
# Time per 50 prospects: 4 minutes. 75x speedup.
```

The CLI plus MCP combination is what makes signal-based outbound viable at the 500+ accounts/week scale. It is also what makes it accessible to dev-leaning teams who already live in Claude Code or Cursor and do not want to learn yet another UI.

For teams who want the full taxonomy of CLI + MCP tools that detect buying signals (and which ones expose proper agent primitives), see our Best AI Tools for Buying Signals 2026 guide.

Get the Signals + Overloop + Claude stack running in 1 day

Same setup that produced 19 meetings in 14 days from 142 leads. EU-hosted, GDPR-cleansed, 14-day free trial.

Real results: 19 meetings in 14 days, no ad spend

The campaign data referenced in the hero. February 2026, 14-day window, US and EU SaaS targets between $1M and $50M ARR.

| Metric | Value | Notes |
|---|---|---|
| Signal-qualified leads contacted | 142 | Filtered by ICP + signal strength (high or mid only) |
| Reply rate | 12% | vs 2-3% cold baseline measured on same ICP, prior quarter |
| Meetings booked | 19 | 4 progressed to pipeline within 30 days |
| Daily human effort | under 20 min | Sequence review + reply triage only |
| Tools cost | $200/mo | Overloop Starter (1 seat) + Signals + Claude API |
| Ad spend | $0 | Pure outbound, no paid amplification |

The full step-by-step setup of this campaign (the ICP definition, the Claude prompt, the 4-touch templates, and the implementation timeline) is laid out in the sections that follow.